Responsible AI in Customer Support: Build Trust or Lose Customers

Just imagine a situation we all know too well: a customer reaches out to support regarding a billing problem, and instead of being stuck in a queue for ages, a chatbot jumps in right away. It looks like a perfect solution for omnichannel support: no waiting, no need to interact with human agents, and customers just get fast resolution.

But another problem occurs if the response is incorrect. The customer tries to explain the situation, but the system just repeats the same scripted scenario. There is no option to escalate the conversation or a clear way to reach a human agent. If there is no human oversight in AI support, users get stuck with no way to find an escape or a solution. Eventually, the customer gives up and leaves frustrated.

AI in customer service moves fast, but operational governance isn’t keeping up. Most teams focus on automation rates, deflection metrics, and chatbot containment. These indicators measure efficiency, but they rarely address accountability. Fewer teams design their chatbots around safe escalation paths, which require meaningful human oversight, transparent communication, and accountability for decisions. Responsible AI in customer service is a system design problem, and today we will discuss how to address it.

The operational challenge of responsible AI in customer service

The market for AI in customer service is projected to hit $83.8 billion by 2033, and the race to automate is already well underway.

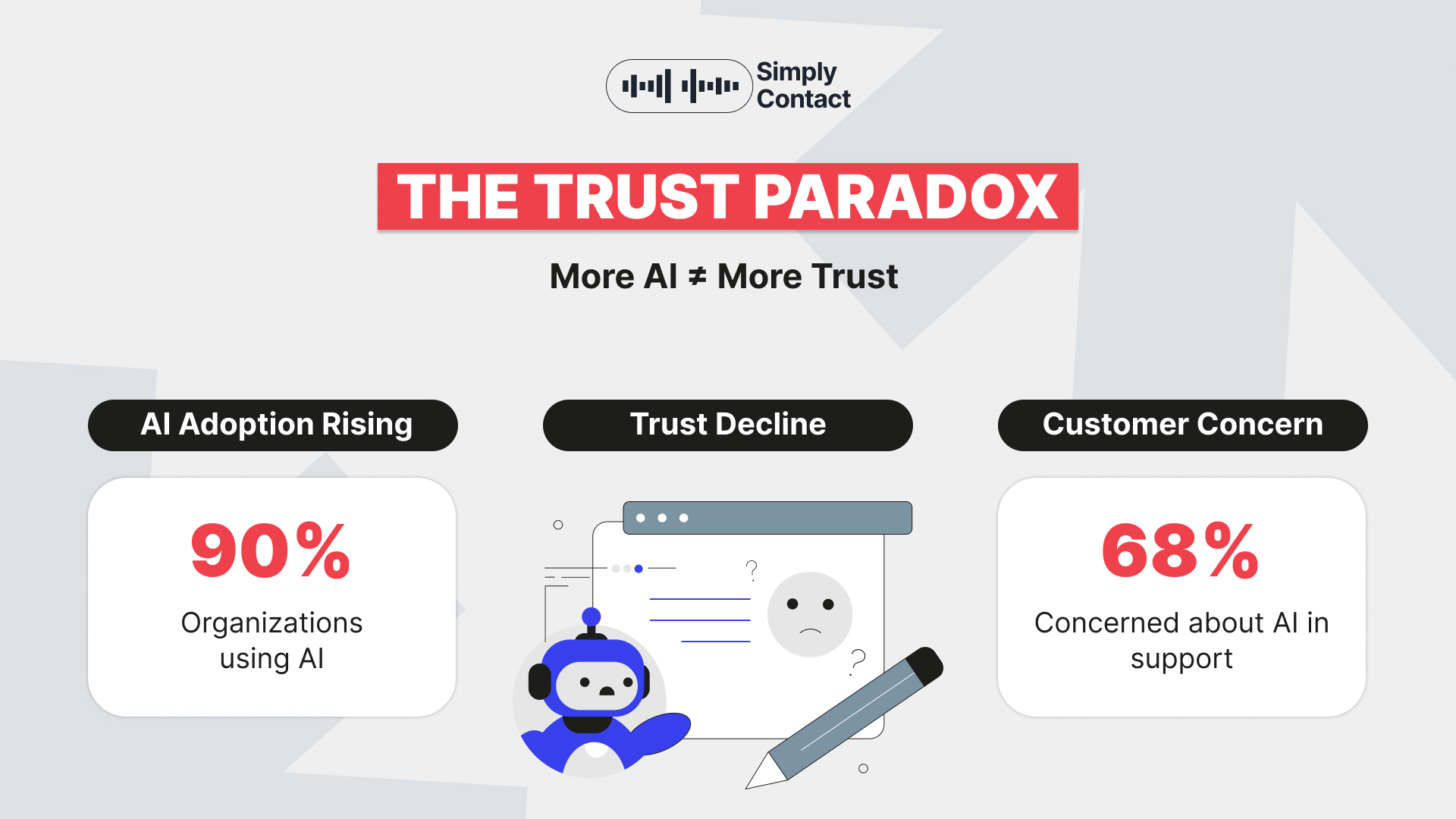

More than 90% of organizations use AI, and nearly 80% of customer experience leaders are actively increasing AI budgets. Moreover, automated systems already handle 30-40% of basic support interactions across many organizations. The tools are everywhere.

- AI chatbots field routine questions around the clock.

- Automated ticket classification routes requests before a human even reads them.

- AI knowledge base assistants surface answers in real time so agents never miss a beat.

- Voice bots handle basic phone inquiries, freeing teams for the complex, high-stakes conversations that actually require human judgment.

But most of these deployments were optimized only for speed and cost savings. That gap leads to trust issues: today, 68% of customers are concerned about AI in support.

Where does AI break customer trust?

AI applications in customer service can significantly increase efficiency and improve CX, but you always need to consider several critical situations in which AI-driven support systems can damage customer trust.

| Challenge | Description | Risks |
| --- | --- | --- |
| The Escalation Gap | Systems used in AI in customer service automation often handle simple requests but struggle with emotional frustration, complex multi-step issues, or regulatory cases. | Delayed escalation, unresolved issues, customer dissatisfaction |
| Context Blindness | In many generative AI use cases in customer service, AI may ignore purchase history, loyalty status, past complaints, or compliance signals. | Poor decisions, irrelevant responses, damaged customer relationships |
| Invisible Automation | As generative AI use cases in customer service expand, customers may not realize they are interacting with automation or know how to reach a human agent. | Reduced trust, frustration, lack of transparency |
| Agent Over-Reliance | One of the common AI misconceptions in customer service is assuming AI always improves decisions, leading agents to follow automated suggestions without critical thinking. | Incorrect decisions, operational errors, escalated problems |

How do you make responsible AI a part of support infrastructure?

Artificial intelligence has already become an essential part of customer service, but only responsible AI use can serve as a base for outstanding customer service. Let's review the steps you can use to upgrade your AI customer support approach.

What does responsible AI support actually look like?

AI solutions in customer service require smart operational design and a balance between speed and accountability. As Iryna Shevelova, expert in networking culture and the founder of Collabro, with extensive experience in systematization, evaluation, and service quality improvement, puts it:

“Responsible AI means how thoughtfully we design CX when automation is involved. Artificial intelligence as a customer support system should help customers and avoid adding more frustration. Our mission is to ensure that AI responses are reliable and relevant for user requests. Transparency is an integral part of customer trust.

When interacting with a chatbot, your customers should always know that they are talking to an automated system. And human teams need to control AI’s performance and the quality of its responses. AI requires control and robust data protection measures. Responsible AI use means earning and keeping customers’ trust while implementing artificial intelligence into the high-level support experience.”

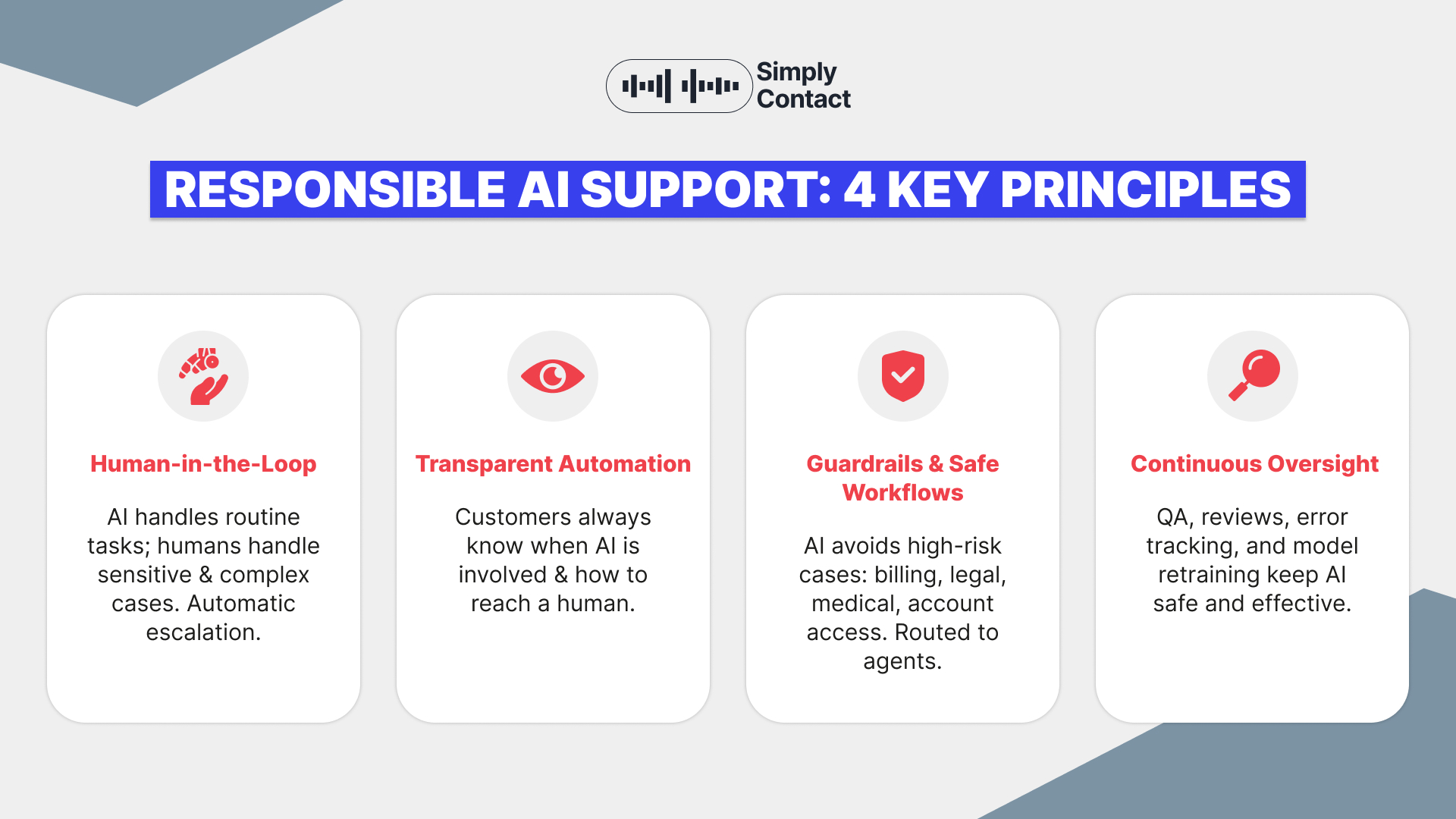

Responsible support automation is defined by its structure. Here are the four main structural elements.

Human-in-the-loop architecture

One of the most important principles of AI structure is human oversight in automated workflows. The future of AI in customer service doesn’t mean fully replacing humans in support operations. Human teams should stay in control and use AI-driven augmentation to work faster and more smoothly.

AI can handle routine, repetitive interactions, such as simple account questions or order status requests. When situations require human judgment or empathy, a human agent should step in and handle the rest.

For instance, companies need automatic, fast escalation when conversations are emotionally charged or involve sensitive cases, such as financial matters. In these situations, an automated response can cause legal or reputational risks and should not be handled by AI.

The best escalation path from AI to human agents triggers automatically and immediately when certain conditions are detected, such as an emotionally charged conversation or a sensitive topic. Customers should not be trapped in endless automated conversations, especially when critical issues arise.
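The escalation conditions described above can be sketched as a simple rule check. This is a minimal illustration, not a production design: the topic list, the frustration phrases, the `MAX_FAILED_BOT_TURNS` threshold, and the `Conversation` structure are all assumptions made for the example, and a real system would likely rely on a trained classifier and tuned thresholds.

```python
import re
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical trigger lists and threshold, chosen only for illustration.
SENSITIVE_TOPICS = {"refund", "chargeback", "legal", "lawsuit", "fraud"}
FRUSTRATION_PHRASES = (
    "this is ridiculous",
    "not helping",
    "speak to a human",
    "talk to a person",
)
MAX_FAILED_BOT_TURNS = 2


@dataclass
class Conversation:
    messages: List[str]        # customer messages, oldest first
    failed_bot_turns: int = 0  # bot replies that did not resolve the issue


def should_escalate(conv: Conversation) -> Tuple[bool, str]:
    """Return (escalate, reason) based on the latest customer message."""
    latest = conv.messages[-1].lower() if conv.messages else ""

    # 1. Emotional signals or an explicit request for a person.
    if any(phrase in latest for phrase in FRUSTRATION_PHRASES):
        return True, "customer frustration or explicit request for a human"

    # 2. Sensitive topics that should not be handled by automation.
    words = set(re.findall(r"[a-z]+", latest))
    if words & SENSITIVE_TOPICS:
        return True, "sensitive topic"

    # 3. The bot keeps failing, so stop looping and hand over.
    if conv.failed_bot_turns >= MAX_FAILED_BOT_TURNS:
        return True, "bot failed repeatedly"

    return False, "ok for automation"
```

For example, `should_escalate(Conversation(["I think this is fraud."]))` returns `(True, "sensitive topic")`. Running the check on every turn, rather than only when the customer asks, is what keeps the handoff automatic.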

Transparent automation

Many organizations considering how to use AI in customer service try to make automation invisible to customers, but they forget that transparency is the best foundation for customer trust. Expert tip: Customers should always clearly understand when they are interacting with a chatbot and know how to switch to a human representative if needed. Frustration is much lower when customers know that they have control over the situation.

If users clearly understand what AI can and cannot do, they can set better expectations and ask for a human agent when a request is beyond the AI’s capabilities. AI governance in CX operations should be built on transparency to improve trust in the brand.
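One way to picture transparent automation is an opening message that discloses the bot, states what it can help with, and names a way out. The sketch below is purely illustrative: the wording of the greeting, the `HANDOFF_KEYWORDS` list, and both function names are assumptions, not part of any particular chatbot platform.

```python
import re
from typing import List

# Hypothetical keywords a customer can type to reach a person.
HANDOFF_KEYWORDS = {"agent", "human", "representative"}


def opening_message(bot_name: str, can_help_with: List[str]) -> str:
    """Build a first message that discloses automation and offers a handoff."""
    topics = ", ".join(can_help_with)
    return (
        f"Hi, I'm {bot_name}, an automated assistant. "
        f"I can help with: {topics}. "
        "Type 'agent' at any time to reach a human representative."
    )


def requested_human(message: str) -> bool:
    """Return True if the customer's message contains a handoff keyword."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    return bool(words & HANDOFF_KEYWORDS)
```

Stating the handoff option in the very first message means the customer never has to guess whether a human is reachable.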

Guardrails and safe workflows

Operational guardrails are essential for AI-powered customer support. Despite the benefits of AI in customer service automation, not all inquiries should be handled automatically.

For instance, AI should avoid responding to billing issues and medical-related situations. In our opinion, legal complaints and account access cases should also be handled by trained professionals. Instead of trying to solve these cases autonomously, a well-designed system will instantly route them to the appropriate expert. AI guardrails in customer service limit automation in sensitive scenarios and prevent automation failures.
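The routing rule described above can be sketched as an allowlist: only categories explicitly approved for automation stay with the bot, and everything else goes to a person. The four restricted categories come from the text; the queue names, the `AUTOMATABLE` set, and the `general_support` fallback are hypothetical, and the sketch assumes an upstream step has already classified the inquiry.

```python
from enum import Enum
from typing import Optional, Tuple


class Route(Enum):
    AUTOMATION = "automation"
    HUMAN_EXPERT = "human_expert"


# Sensitive categories named in the text, mapped to hypothetical expert queues.
RESTRICTED = {
    "billing": "billing_team",
    "medical": "medical_support",
    "legal_complaint": "legal_team",
    "account_access": "account_security",
}

# Hypothetical categories approved for fully automated handling.
AUTOMATABLE = {"order_status", "faq"}


def route_inquiry(category: str) -> Tuple[Route, Optional[str]]:
    """Decide who handles an inquiry; anything not allowlisted goes to a human."""
    if category in RESTRICTED:
        return Route.HUMAN_EXPERT, RESTRICTED[category]
    if category in AUTOMATABLE:
        return Route.AUTOMATION, None
    # Fail closed: unknown categories are treated as unsafe to automate.
    return Route.HUMAN_EXPERT, "general_support"
```

Failing closed is the design choice worth noting here: a new or misclassified inquiry type reaches a human by default instead of receiving an automated answer nobody reviewed.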

Continuous oversight

Many teams believe that once AI systems are integrated, they can work independently. But responsible AI in customer service requires continuous operational monitoring, including QA. Effective implementation requires a governed infrastructure and regular reviews of AI output.

Efficient use of AI in customer service is impossible without error tracking and regular model retraining. It is a path of constant learning and improvement. Artificial intelligence is not a “set and forget” system. A strong AI governance framework helps maintain customer trust and regulatory compliance.
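As a rough illustration of what ongoing oversight can involve, the sketch below samples AI-handled conversations for human QA review, computes an error rate from the reviewers' verdicts, and flags when retraining may be due. The 10% sampling rate, the 5% error threshold, and all names are assumptions chosen for the example rather than recommended values.

```python
import random
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class ReviewedReply:
    conversation_id: str
    correct: bool  # verdict from a human QA reviewer


def sample_for_review(
    conversation_ids: Sequence[str], rate: float = 0.1, seed: Optional[int] = None
) -> List[str]:
    """Pick a random share of AI-handled conversations for human review."""
    ids = list(conversation_ids)
    if not ids:
        return []
    k = max(1, round(len(ids) * rate))  # always review at least one
    return random.Random(seed).sample(ids, k)


def error_rate(reviews: Sequence[ReviewedReply]) -> float:
    """Share of reviewed replies that the reviewer marked as incorrect."""
    if not reviews:
        return 0.0
    return sum(not r.correct for r in reviews) / len(reviews)


def needs_retraining(reviews: Sequence[ReviewedReply], threshold: float = 0.05) -> bool:
    """Flag the model for retraining when the error rate exceeds the threshold."""
    return error_rate(reviews) > threshold
```

Tracking the error rate over time, for instance weekly, turns "continuous oversight" into a number the team can act on.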

Conclusions

Responsible AI in customer service will continue to transform approaches to CX and reshape support operations in the coming years. New technologies will emerge, and companies will further experiment with advanced capabilities to improve agentic AI in customer service. However, automation without governance creates risks, and unsupervised AI can produce incorrect answers. Also, invisible AI support and unclear paths to human agents can frustrate customers.

The leading brands are not the ones that automate the most. The competition is won by the companies that design AI systems customers can trust. Responsible AI systems require clear structures that include safe escalation paths and constant human oversight.

They should be built on transparency and operational accountability. The question “How much can we automate?” should not be your guiding star. Ask yourself if your AI support system is designed to protect customer trust.

At Simply Contact, we make our clients' trust and loyalty our priority; this is why we are always glad to collaborate with companies seeking to design safe, AI-driven support operations.
